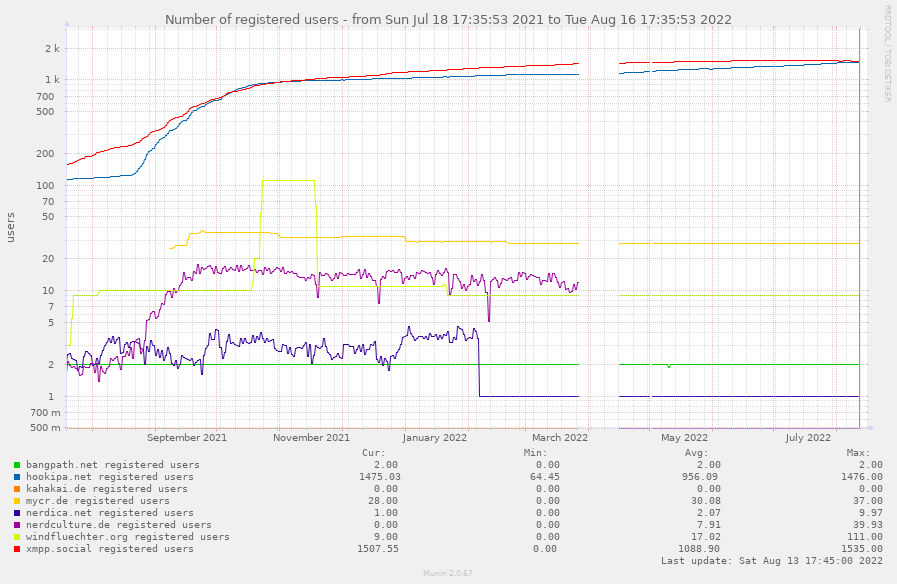

What is interesting here is that for some time xmpp.social seemed to be the domain of choice for many users, maybe because of “xmpp” and “social” in the domain name, or because it is easier to say than “hookipa” with “double-oh” and “kay”… who knows…

So the user count on xmpp.social was rising along a steeper curve than on hookipa.net, so much so that I even considered moving over to xmpp.social as the main domain of the website.

But then something happened: the curated list of XMPP providers appeared, which is now available at https://providers.xmpp.net. Since then some client apps have included the list, e.g. uwpx, and the user count started rising on hookipa.net while it slowed down on xmpp.social.

Here you can see that the red curve of xmpp.social was above the blue curve of hookipa.net for a long time. Around August 2021 something changed and hookipa.net began steadily increasing its user count. A year later, roughly in August 2022, hookipa.net surpassed the user count of xmpp.social.

The reason for this might be that hookipa.net is listed as a Class A provider on providers.xmpp.net. Class A just means that certain criteria are met, like open registration and such. It doesn’t say anything about whether or not it is a well-operated service. Well, at least not directly.

You can also look at the graphs on https://the-federation.info showing the increase on hookipa.net and the stagnation on xmpp.social. There you can see the difference between a service that is listed on such a providers list and one that isn’t. Both domains are operated equally on my server, and when you visit the Hookipa website you can register for either domain. But currently a downside (for me) of providers.xmpp.net is that you need to provide the data for your classification on a website. Hookipa has a website; xmpp.social does not, because it redirects to hookipa.net. Therefore xmpp.social is not included on providers.xmpp.net and thus is not gaining as many new users as hookipa.net.

I find it quite interesting how registration counts shift from one domain to the other over time, and what leads to that shift.

If you want to know which XMPP apps make use of the providers list, you can have a look at https://providers.xmpp.net/apps/.

]]>